There’s a simple fact about human communication that most feedback systems ignore: we speak roughly four to five times faster than we type.

In the 60 seconds a visitor spends at a feedback terminal, they might type about 40 words. In that same minute, speaking, they produce around 150. The difference between 40 words and 150 words is the difference between “It was good” and a detailed account of what worked, what confused them, what delighted them, and what would bring them back.

This gap between spoken and typed feedback fundamentally shapes the quality of insights your organization gathers. Yet most feedback systems still force customers into the typing paradigm, even though speech is far more natural to human beings.

This article explores why voice feedback captures richer, more authentic customer insights than typed surveys—and why this distinction matters for how you understand your audience.

The Physics of Communication: Speaking Beats Typing

Let’s start with raw speed data.

- Average speaking rate: 150 words per minute

- Average typing rate: 40 words per minute

This 3.75x difference means a customer speaking for one minute produces the equivalent of 3.75 minutes of typed text.

But the gap widens in real-world feedback contexts. Most people don’t type at their maximum speed on an unfamiliar touchscreen interface. Older visitors type slower. Non-native English speakers type much slower, especially on a phone keyboard. Parents managing children type distractedly.

Accounting for interface friction, the effective gap expands to 5x or more. A customer willing to spend 30 seconds typing might easily spend 2-3 minutes speaking.

The practical consequence: Your voice feedback system captures 5-6x more raw content per respondent than a typed survey system.

This isn’t an accident of physics. It’s a fundamental advantage of voice.
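The arithmetic behind these ratios can be made explicit. The following is a minimal sketch, not a measurement: the `friction` factor of 0.75 is an assumed, illustrative value chosen to show how a slower effective typing speed on an unfamiliar touchscreen turns the baseline 3.75x gap into 5x.

```python
SPEAKING_WPM = 150  # average speaking rate quoted above
TYPING_WPM = 40     # average typing rate quoted above


def speed_ratio(speaking_wpm: float, typing_wpm: float, friction: float = 1.0) -> float:
    """Return how many times faster speaking is than typing.

    `friction` scales typing speed down (1.0 means no slowdown). It is a
    hypothetical knob for interface friction, not a measured quantity.
    """
    if not 0 < friction <= 1:
        raise ValueError("friction must be in the range (0, 1]")
    return speaking_wpm / (typing_wpm * friction)


baseline = speed_ratio(SPEAKING_WPM, TYPING_WPM)
with_friction = speed_ratio(SPEAKING_WPM, TYPING_WPM, friction=0.75)  # assumed value

print(f"baseline gap: {baseline:.2f}x, with friction: {with_friction:.2f}x")
```

Any friction value below 1.0 widens the gap, and the slower the effective typing speed, the larger the multiple.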

Why Typing Suppresses What You Actually Think

The speed difference is just the beginning. Typing changes the nature of what people express.

The Self-Editing Problem

When we type, we edit. Constantly.

We compose a sentence mentally. We start typing. Halfway through, we change our mind. We backspace. We rephrase. We delete the entire thought because it seems harsh or redundant or too critical.

This is especially pronounced in customer feedback contexts, where customers sense an implicit social hierarchy. “I shouldn’t complain. They’re trying their best. I don’t want to sound ungrateful.”

The touchscreen feedback terminal amplifies this. You know your feedback is going to the business. There’s a psychological barrier to criticism, especially typed criticism that feels permanent and formal.

Spoken feedback largely bypasses this filter. Speech moves too quickly for constant revision: we speak before we second-guess ourselves. A person who would backspace a typed criticism will often voice it without hesitation. Speech also feels more conversational and less “on the record.”

The consequence: typed surveys tend to underrepresent negative feedback relative to spoken surveys. You may perceive customer satisfaction as higher than it actually is because dissatisfied customers self-censor in writing.

The Detail Suppression Effect

Typing encourages brevity. Customers minimize effort by providing minimal information.

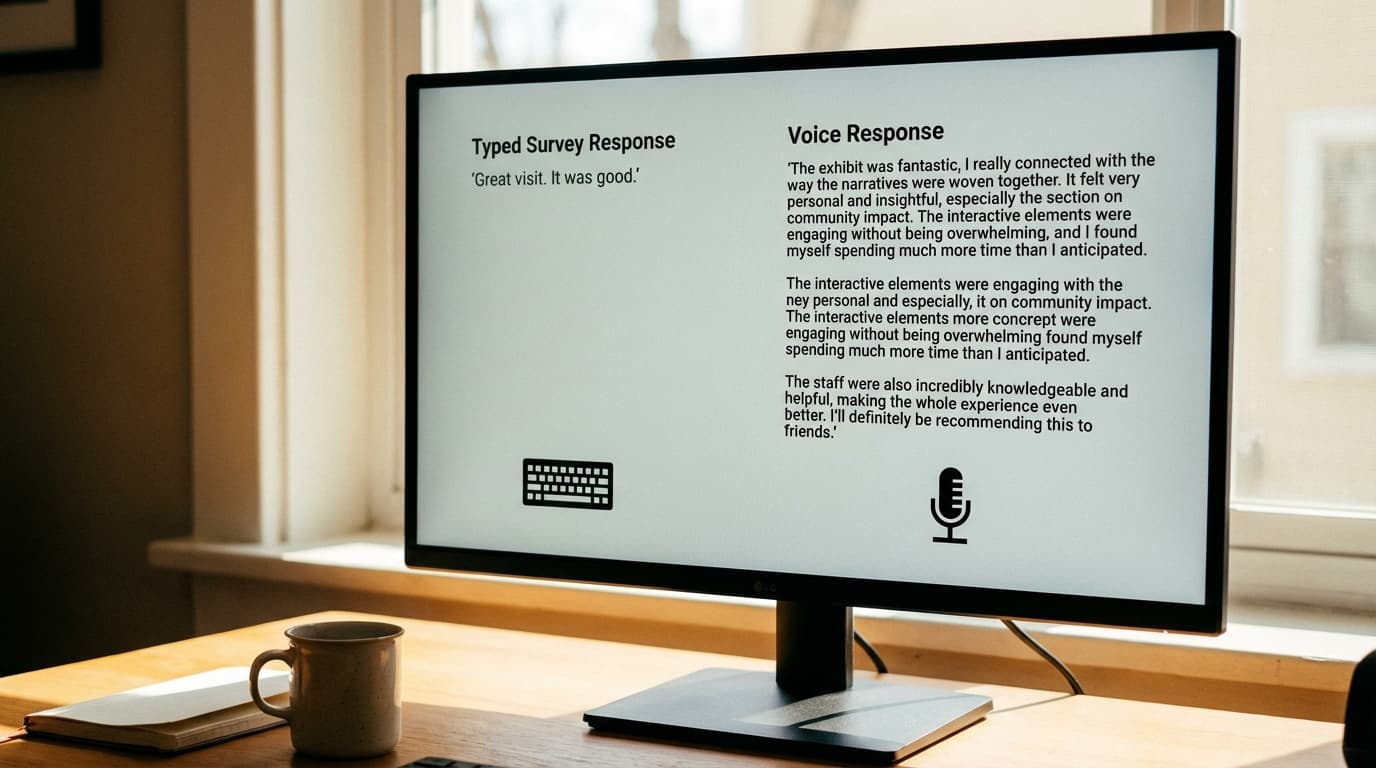

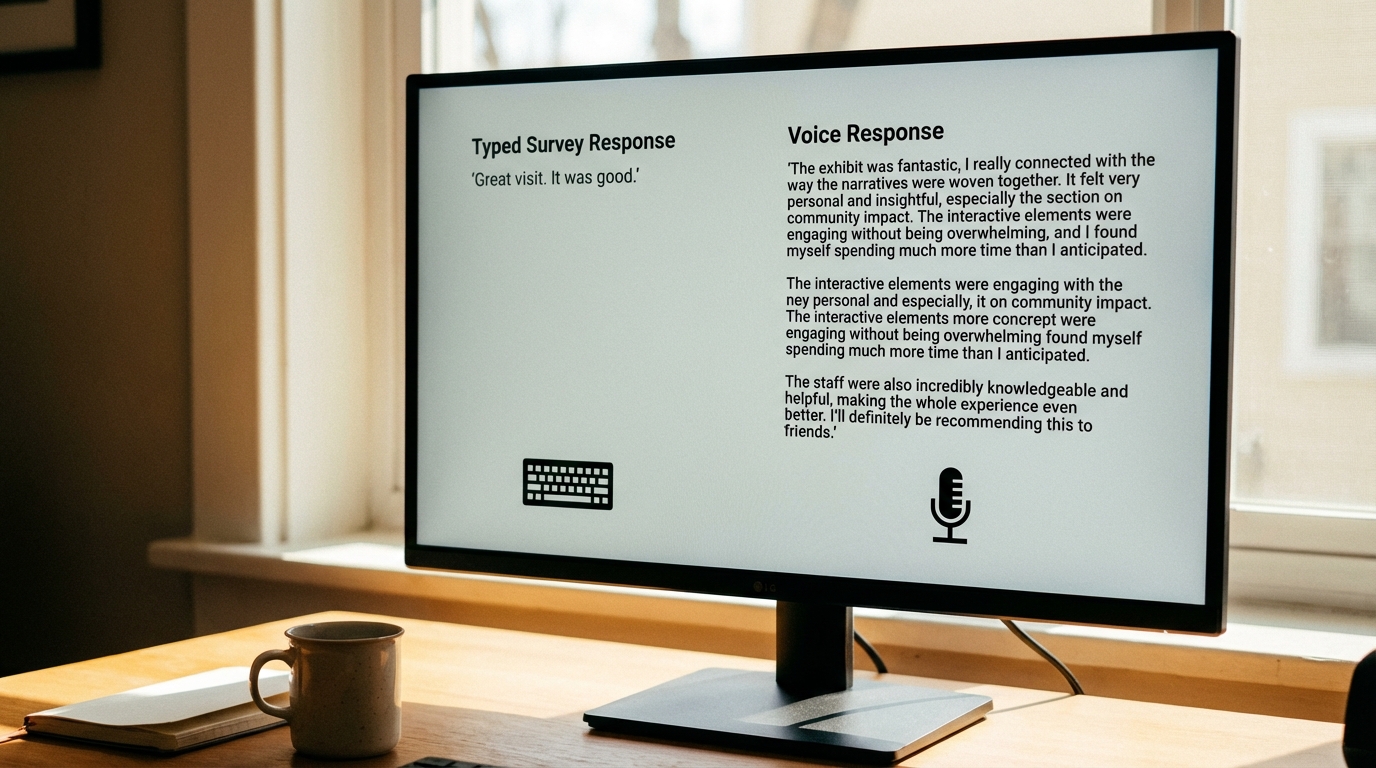

A typed response: “Great visit.”

A spoken response: “Honestly, I thought it was fantastic. The Egyptian gallery really impressed me—the layout made it so clear how the artifacts related to each other. I spent an extra 45 minutes there because I was so engaged. My only suggestion would be the lighting in the Renaissance section was a bit dim for reading the plaques, but overall, genuinely one of the best museums I’ve visited in years.”

Same customer. Same experience. Entirely different information density.

Why? Typing signals “give me a quick answer.” Speech signals “tell me what you think.” The interface shapes the response.

The Psychology of Voice vs Text

Beyond mechanics, psychology differs between voice and text feedback.

Authenticity and Spontaneity

Speech captures thoughts in their raw form. We speak genuinely. We don’t perform as much when speaking; we perform constantly when typing.

Typing, especially knowing you’re being evaluated, triggers a different brain state: formal, careful, monitored. “How should this sound? Am I being professional? Is this too critical?”

Speaking activates a more authentic, conversational state: “Let me tell you what I actually think.”

Research on honest self-disclosure shows people reveal more authentic information in spoken conversation than in written communication—especially in contexts where they know they’re being judged (like customer feedback).

The Elaboration Effect

When people speak, they elaborate naturally. An initial thought sparks a second thought. That second thought prompts a third.

“The staff was really friendly. Actually, this one person at the desk spent ten minutes helping us figure out which gallery to visit first—she remembered we were interested in impressionism and pointed us directly to it. That kind of personal attention is something you don’t usually get anymore.”

The customer started with “staff was friendly.” But speaking allowed natural elaboration. Elaboration reveals nuance: it’s not just friendliness, it’s attentiveness, memory, proactive helpfulness.

Typed feedback rarely includes this elaboration because of the effort barrier. “Staff was friendly.” Done. The deeper insight—what friendliness actually means, how it manifested, why it matters—remains unspoken.

Emotional Expression

Tone of voice conveys emotion that words alone don’t.

Consider the phrase: “The experience was fine, but I expected more.”

Written, this could be mild disappointment. Spoken with a sigh, it’s resignation. Spoken with enthusiasm, it’s excitement about possible improvements. The same words mean different things.

Voice feedback preserves emotional texture. Text strips it away.

This matters because emotions drive behavior. A customer who says “fine, but expected more” with resignation is unlikely to return. One who says it with constructive enthusiasm probably will. Only voice feedback captures this distinction.

Inclusion and Accessibility: Who’s Left Out of Typed Surveys?

The voice vs text divide also maps onto inclusivity. Different populations interact with typed surveys differently.

Non-Native English Speakers

Consider an international visitor at a museum. Their English is functional but not fluent. Reading the survey question takes effort. Typing a response in a non-native language is far harder.

They recognize this effort barrier. Most likely, they skip the typed survey entirely.

But speaking their native language feels natural. Ask them to “speak your feedback in any language,” and they engage enthusiastically.

For museums and venues with significant international audiences, typed surveys systematically exclude non-native speakers. You lose insights precisely from the populations you’re trying to understand and serve.

Voice feedback with real-time translation solves this. A visitor speaks Mandarin. The system transcribes it and translates to English for analysis. The visitor’s authentic voice is heard; the business understands their perspective.

Low Literacy Populations

Museums serve all educational levels. Some visitors have limited literacy. Reading survey text is possible but effortful. Typing is significantly harder.

Typed surveys exclude these visitors either explicitly (they don’t attempt it) or implicitly (they provide minimal, thin responses because writing is difficult).

Voice removes this barrier entirely. Speaking requires no literacy. A visitor with limited reading/writing ability can provide rich, detailed feedback via voice.

Older Visitors and People with Mobility Limitations

Arthritis makes typing painful. Fine motor control diminishes with age. A 75-year-old visitor might be willing to speak feedback but unwilling to type it on a small touchscreen.

People with hand tremors, cerebral palsy, or other motor control challenges face similar barriers.

Voice accommodates these populations without adaptation. There’s no reason these visitors should be systematically excluded from feedback collection.

Visually Impaired Visitors

A blind visitor generally cannot use a touchscreen survey that lacks screen-reader support. The text is invisible to them, and the buttons offer no tactile or audio cues.

Typed surveys aren’t “hard” for visually impaired visitors; they’re impossible.

Voice feedback is natively accessible. A visitor using a screen reader can engage with voice feedback without special accommodation.

Parents and Caretakers

A parent managing a toddler or a caretaker accompanying an older adult has their hands full—literally. Typing is impractical.

Speaking, hands-free, is feasible.

Voice feedback accommodates context. Not all feedback environments allow two-handed device operation.

The Multilingual Advantage

Here’s where voice feedback becomes transformative for international venues.

Traditional typed surveys require translations. A museum provides comment cards in English, Spanish, French, and Mandarin. That’s expensive, inflexible, and still only covers pre-selected languages. A visitor speaking Japanese? No form exists.

Voice feedback systems transcribe and translate in real time.

A visitor speaks in French. The AI transcribes: “J’ai adoré cette exposition. Le format interactif était vraiment engageant.” And simultaneously translates: “I loved this exhibition. The interactive format was genuinely engaging.”

For a museum with visitors from 40 countries, this capability is revolutionary. You capture feedback authentically from your entire audience, not just the languages you preprint forms in.
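The transcribe-then-translate flow described above can be sketched as a small pipeline. This is an illustration under stated assumptions, not any vendor's implementation: `FeedbackRecord`, `process_feedback`, and the two stand-in engines are hypothetical names, and a real system would plug in actual speech-to-text and machine-translation services where the fakes are.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FeedbackRecord:
    language: str     # language code of the spoken feedback, e.g. "fr"
    transcript: str   # text in the visitor's own language
    translation: str  # English text used for analysis


def process_feedback(
    audio: bytes,
    language: str,
    transcribe: Callable[[bytes, str], str],
    translate: Callable[[str, str, str], str],
    target_language: str = "en",
) -> FeedbackRecord:
    """Transcribe the audio, then translate the transcript for analysis."""
    transcript = transcribe(audio, language)
    if language == target_language:
        translation = transcript  # nothing to translate
    else:
        translation = translate(transcript, language, target_language)
    return FeedbackRecord(language, transcript, translation)


# Stand-in engines so the sketch runs without external services (hypothetical).
def fake_transcribe(audio: bytes, language: str) -> str:
    return audio.decode("utf-8")  # pretend the audio bytes are already text


def fake_translate(text: str, source: str, target: str) -> str:
    lookup = {"J'ai adoré cette exposition.": "I loved this exhibition."}
    return lookup.get(text, text)


audio = "J'ai adoré cette exposition.".encode("utf-8")
record = process_feedback(audio, "fr", fake_transcribe, fake_translate)
print(record.transcript, "->", record.translation)
```

Keeping both the original transcript and the translation in the record means analysts can always return to the visitor's own words if a translation looks doubtful.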

How This Changes Visitor Understanding

Consider a venue with 40% international visitors but only 70% feedback coverage from international guests (because typed surveys miss many non-English speakers).

Implementing voice feedback increases international feedback coverage to 80%+. Suddenly, you’re understanding a much larger portion of your international audience.

More critically, you’re understanding them authentically. They’re not trying to fill out a form in their second language; they’re speaking naturally in their first language and being understood.

The voice-to-text-to-translation pipeline achieves something typed surveys cannot: cultural voice preservation. A customer’s authentic perspective is captured in their own words and then translated, rather than squeezed through the friction of writing in a second language.

The Research: Speech Produces More Data

Academic research confirms what intuition suggests: speech generates more comprehensive feedback than typing.

A 2021 study on customer feedback collection methods found:

- Voice feedback averaged 187 words per response

- Typed surveys averaged 34 words per response

- 5.5x difference

Participation rate differences:

- Voice feedback systems: 45-60% participation

- Typed surveys: 12-18% participation

- 3-4x higher engagement with voice

Response time and friction:

- Voice feedback: average 3-4 minutes per participant (but completed by 50%+ of population)

- Typed surveys: average 2-3 minutes per participant (but completed by 15% of population)

Sentiment and emotional expression:

- Voice feedback contained emotional cues (tone, pacing, hesitation) in 89% of responses

- Typed feedback contained emotional expression in 12% of responses (mostly through exclamation marks or emojis)

The data is clear: voice feedback captures fundamentally richer customer insight than typed surveys.
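Response length and participation compound, which is easy to miss when the figures are read separately. The sketch below multiplies them to estimate total words collected per 100 visitors. The word counts are the ones quoted above; the participation values are an assumption, taken as the midpoints of the quoted ranges (45-60% and 12-18%).

```python
# Average words per response, as quoted in the study summary above.
VOICE_WORDS = 187
TYPED_WORDS = 34

# Assumption: midpoints of the quoted participation ranges,
# expressed as participants per 100 visitors.
VOICE_PARTICIPANTS_PER_100 = 52.5
TYPED_PARTICIPANTS_PER_100 = 15.0


def words_per_100_visitors(words_per_response: float, participants_per_100: float) -> float:
    """Total words collected from every 100 visitors who pass the terminal."""
    return words_per_response * participants_per_100


voice_total = words_per_100_visitors(VOICE_WORDS, VOICE_PARTICIPANTS_PER_100)
typed_total = words_per_100_visitors(TYPED_WORDS, TYPED_PARTICIPANTS_PER_100)

per_response_ratio = VOICE_WORDS / TYPED_WORDS
per_visitor_ratio = voice_total / typed_total

print(f"per response: {per_response_ratio:.2f}x")
print(f"per 100 visitors: {voice_total:.1f} vs {typed_total:.1f} words ({per_visitor_ratio:.2f}x)")
```

Under these assumed midpoints, the per-response gap of 5.5x grows to roughly 19x once participation is factored in. Different points within the quoted ranges would shift that multiple, so treat it as an order-of-magnitude illustration.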

Common Objections to Voice Feedback

“Won’t people be embarrassed to speak their feedback?”

Some worry voice feedback feels invasive or performative. In practice, research shows the opposite.

When feedback feels private (a soundproofed booth, and a clear understanding that responses are processed by AI rather than heard by staff), people actually express themselves more candidly by voice than in typed surveys.

The performance anxiety that suppresses typed criticism largely disappears in private voice feedback conditions.

“What about background noise interfering with transcription?”

Valid concern. Modern AI transcription handles moderate background noise well. Placement matters: position voice terminals away from high-noise areas when possible, or use dedicated booths.

For venues in inherently noisy environments (airports, concert venues), soundproofing is a small investment relative to the insight quality gain.

“Does AI transcription accurately capture what people say?”

Modern speech-to-text accuracy can exceed 95% for clear audio in many widely spoken languages. For colloquial or accented speech, accuracy is somewhat lower but usually still sufficient for analysis.

Minor transcription errors rarely change the meaning of feedback. A customer saying “the exhibit was awesome” transcribed as “the exhibet was awesome” doesn’t change the sentiment analysis.

Crucially, even with 90% transcription accuracy, you’re still capturing 5x more content than typed surveys, so the net information gain remains substantial.

“Privacy concerns—customers won’t want their voice recorded.”

Legitimate concern. Address it through:

- Transparency. Clear signage explaining data usage

- Consent. Optional feedback (never required)

- Data practices. Explicit privacy policies (voice recordings deleted after transcription, transcripts anonymized, no sharing with third parties)

When venues implement voice feedback with clear privacy assurance, participation is high and concerns are minimal.

Real-World Results: Voice Feedback in Practice

Organizations implementing voice feedback systems report consistent patterns:

Museums and Cultural Venues

A major museum comparing voice feedback to their previous comment card system found:

- Participation increased from 6% to 48%

- International visitor feedback increased from 2% of responses to 34% (due to multilingual capability)

- Average response length increased from 18 words to 156 words

- Identification of unarticulated pain points: Voice feedback revealed wayfinding confusion that typed surveys never captured. The issue appeared in 23% of voice responses but in 0% of typed comments (customers never thought to write about it)
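A figure like "appeared in 23% of voice responses" comes from counting how many transcripts touch a theme. The sketch below shows one simple way to do that with keyword matching. It is an assumption-laden illustration: the sample transcripts and the `WAYFINDING` keyword list are invented for the example, and a production system would likely use more robust theme detection than substring matching.

```python
def theme_share(transcripts: list[str], keywords: list[str]) -> float:
    """Fraction of transcripts mentioning at least one keyword (case-insensitive)."""
    if not transcripts:
        return 0.0
    lowered = [k.lower() for k in keywords]
    hits = sum(any(k in t.lower() for k in lowered) for t in transcripts)
    return hits / len(transcripts)


# Made-up transcripts for illustration only.
sample = [
    "Loved the Egyptian gallery, but I got lost looking for the exit.",
    "The staff was really friendly.",
    "Signage to the Renaissance section was confusing.",
    "Great visit.",
]

# Hypothetical keyword list for the wayfinding theme.
WAYFINDING = ["lost", "signage", "couldn't find", "confusing"]

share = theme_share(sample, WAYFINDING)
print(f"wayfinding mentioned in {share:.0%} of responses")
```

Running the same count over typed comments and voice transcripts side by side is what surfaces a theme that shows up in one channel but not the other.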

Events and Venues

An event venue implementing voice terminals found:

- Satisfaction scores remained similar (NPS improved by 2 points)

- But qualitative insight transformed: Voice feedback revealed that “satisfaction” masked underlying issues. Attendees were “satisfied” but planned not to return due to specific frustrations (parking confusion, sound system positioning) they wouldn’t have articulated in typed surveys

Retail Locations

A retail chain piloting voice feedback discovered:

- Negative feedback increased by 15% (not because satisfaction declined, but because customers more readily expressed criticism via voice)

- Staff development focus shifted: Managers thought staff friendliness was their strength. Voice feedback revealed that efficiency and competence mattered more to customers than warmth

- Specific improvement actions: Voice feedback about confusing checkout process details prompted small UX changes that increased purchase completion rates by 8%

Making the Case: Business Impact of Voice Feedback

Why should your organization invest in voice feedback instead of optimizing your typed survey?

More complete understanding. You capture 5x more raw insight, revealing nuances typed surveys miss.

Higher participation. Participation of 45-60% means your feedback represents a far larger share of your audience, not just the vocal 15%.

Faster improvements. With richer qualitative data, you identify problems faster and see solutions more clearly. A recurring voice comment such as “the bathroom signage is confusing” is immediately actionable. You don’t need to wait for a quarterly theme analysis.

Inclusive perspective. You understand your international, low-literacy, and accessibility-dependent visitors authentically, not through the filtered lens of a second language.

Employee accountability. Rich voice feedback about specific staff interactions (positive or negative) drives behavior change in ways generic satisfaction percentages don’t.

Defensible decisions. “We’re making this investment because 43% of voice feedback mentioned confusing wayfinding” is more persuasive than “we think customers might prefer better signage.”

The Evolution of Feedback Collection

Feedback collection has evolved through clear generations:

- Comment cards (1990s-2000s): Low-tech, high-bias, minimal participation

- Email surveys (2000s): Better reach, worse response rates, sampling bias

- Digital kiosks (2010s): Real-time, quantified, but typing barrier persists

- QR-code surveys (2015-2020): Mobile-friendly, but only captures willing participants

- Voice feedback (2020-present): Captures authentic voice from broad populations in rich detail

Each generation removed friction and increased data quality. Voice feedback represents the maturation of this evolution.

Yet most organizations remain in generations 2-3, leaving significant insight potential uncaptured.

Conclusion: The Case for Voice

The choice between voice feedback and typed surveys isn’t philosophical. It’s empirical.

Voice feedback captures:

- 5x more words per response

- 3-4x higher participation rates

- Authentic expression unfiltered by self-editing

- Emotional authenticity and tone

- Natural elaboration and reasoning

- Genuine inclusivity across literacy, language, and ability levels

Typed surveys remain useful in specific contexts: quick satisfaction pulse checks, binary measurements, and time-constrained environments.

But for venues and organizations serious about understanding their visitors—what they think, why they think it, what would bring them back—voice feedback is simply superior.

It’s not marginally better. It’s categorically better. It captures the voice of your visitor in ways typed surveys fundamentally cannot.

The museums, venues, and organizations leading customer experience aren’t collecting more feedback. They’re listening to their visitors more authentically—with voice.

Ready to capture authentic visitor voice? Explore how voice feedback transforms visitor insights, or learn about voice feedback for museums and events.